In the field of artificial intelligence, international competition is tough. So when researchers from CEA-Joliot and CEA-IRFU challenge start-ups and other companies specializing in AI, we cheer them on. Here’s a success story from the field of MRI reconstruction.

Among the most prominent artificial intelligence approaches, deep learning and its convolutional neural networks (CNNs) are of particular interest to developers of magnetic resonance image reconstruction algorithms. Their benefit is twofold. First, artificial neural networks make it possible to recover images of a quality similar to those obtained from complete data sets while collecting only a small fraction of the data, typically one eighth of the full data set. Second, these algorithms reconstruct the images 10 to 100 times faster than previous methods. Ultimately, patients reap the benefits: hospital examination times are greatly reduced, so more people can be scanned each day.

In the interest of advancing medical technology, AI naturally attracts the digital giants. Facebook AI Research and NYU Langone Health recently launched fastMRI, a collaborative open data research project. Their goal is to assess the performance of CNNs under development around the world and to create a reference benchmark. NYU Langone Health released full raw MRI data sets of the knee in 2018 and of the brain in late 2019. For brain imaging, data were collected from 6,970 individuals, on 11 different Siemens Healthineers scanners, at 1.5 or 3 teslas (43% of the data were collected on 1.5 T MR systems), from five partner sites in the United States. The data sets also mix several imaging contrasts (T1, T2 or FLAIR weighting), which provide access to additional information on brain tissue. The data are freely accessible (see https://fastmri.med.nyu.edu/ for details).

Cosmostat & Parietal: a fruitful collaboration

The release of open access data prompted Zaccharie Ramzi, a doctoral student under the supervision of Philippe Ciuciu (PARIETAL INRIA-CEA team, NeuroSpin, Joliot Institute) and Jean-Luc Starck (Cosmostat, IRFU CEA-CNRS), to carry out a comparative analysis of several deep neural networks used in MRI. This benchmark is a concrete application of their joint Cosmic project (Compressed Sensing for Magnetic resonance Imaging and Cosmology), which pools the deep learning skills of the two teams to reduce scan time and speed up image reconstruction without degrading image quality. Cosmostat brings to the project its expertise in reconstructing images from large astrophysical surveys, including algorithms, 3D image reconstruction and image representation. The NeuroSpin team, for its part, brings its knowledge of MRI acquisition and software engineering. The issues to overcome are the same for astrophysical and MRI images: missing data and background noise that dominates the measurements. This collaboration has already enabled important advances: computation times for astrophysical images were reduced tenfold after optimization, and acquisition times in 2D multislice imaging dropped from 4 minutes to 18 seconds thanks to the compressed sensing strategies implemented on MR systems at NeuroSpin.

The first experience with the fastMRI data in 2019 strengthened the researchers' ability to verify the robustness of pre-existing algorithms and to test their own developments. When Facebook AI Research and NYU Langone Health announced the international “Brain FastMRI Challenge” in October 2020, the CEA experts seized the opportunity to take part. The challenge aimed to compare how well different CNN architectures reconstruct images from under-sampled data, which correspond to the faster examinations that could be performed in the future.

On October 1st, the competition test data set was released, artificially under-sampled by a factor (R) of 4 or 8 to match 4- or 8-fold shorter scan times. Prior to that, the international community had been able to train its CNN architectures for several months on training and validation data sets in a supervised manner, that is, with knowledge of the fully sampled data. The challenge with the test data is, of course, that no ground truth (that is, the complete data) is available, which makes the competition blind and thus more challenging, yet fair to all contenders. In addition to these 4x and 8x tracks, the challenge also included an unsupervised transfer learning track, which tested neural networks on data from General Electric or Philips MR systems although they had been trained on Siemens Healthineers data only.
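The retrospective under-sampling described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the organizers' procedure: the function names, the equispaced line pattern and the `center_fraction` default are hypothetical, and a single-coil 2D image stands in for the multi-coil raw data.

```python
import numpy as np

def equispaced_mask(n_lines, acceleration, center_fraction=0.08):
    """Boolean mask over k-space columns (hypothetical sampling pattern).

    Keeps every `acceleration`-th column plus a fully sampled
    low-frequency band around the center of k-space.
    """
    mask = np.zeros(n_lines, dtype=bool)
    mask[::acceleration] = True
    n_center = int(round(n_lines * center_fraction))
    start = (n_lines - n_center) // 2
    mask[start:start + n_center] = True
    return mask

def undersample(image, acceleration):
    """Return (masked k-space, mask, zero-filled reconstruction)."""
    # Centered k-space: the zero frequency sits in the middle of the array.
    kspace = np.fft.fftshift(np.fft.fft2(image))
    mask = equispaced_mask(image.shape[1], acceleration)
    # Zero out the columns that a faster scan would not have acquired.
    masked = kspace * mask[np.newaxis, :]
    # Naive reconstruction: inverse FFT with the missing samples left at zero.
    zero_filled = np.fft.ifft2(np.fft.ifftshift(masked))
    return masked, mask, zero_filled

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image = rng.random((64, 64))  # synthetic stand-in for an MR image
    for r in (4, 8):
        _, mask, zero_filled = undersample(image, r)
        error = np.linalg.norm(np.abs(zero_filled) - image) / np.linalg.norm(image)
        print(f"R={r}: kept {mask.sum()} of {mask.size} columns, "
              f"relative error {error:.2f}")
```

Because a low-frequency band is kept in addition to every R-th column, the retained fraction in this sketch is slightly above 1/R. The zero-filled image is the aliased starting point that a reconstruction network is then asked to improve.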

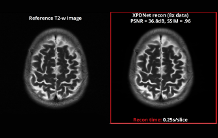

The deadline for submitting results was October 15. The jury proceeded in two stages. First, quantitative metrics were calculated, including the Structural Similarity (SSIM) score between the participants' reconstructions and the reference images, which were reconstructed from the complete data available only to the organizers. Then, radiologists visually evaluated the images on specific criteria (artifacts, image sharpness, contrast-to-noise ratio).
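To give an idea of what the SSIM score measures, here is a simplified sketch. It is an assumption-laden illustration rather than the challenge's evaluation code: SSIM is normally computed over small local windows and then averaged, whereas this hypothetical `global_ssim` function applies the same formula once to the whole image, with the customary constants 0.01 and 0.03.

```python
import numpy as np

def global_ssim(reference, reconstruction, data_range=None):
    """Single-window SSIM over the whole image (a simplification).

    Compares mean intensity, contrast and structure of the two images.
    Assumes the reference is not constant, so that data_range > 0.
    """
    x = np.asarray(reference, dtype=np.float64)
    y = np.asarray(reconstruction, dtype=np.float64)
    if data_range is None:
        data_range = x.max() - x.min()
    # Small constants that stabilize the ratios.
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2
    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov_xy = ((x - mu_x) * (y - mu_y)).mean()
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    reference = np.linspace(0.0, 1.0, 64 * 64).reshape(64, 64)
    noisy = reference + 0.2 * rng.standard_normal(reference.shape)
    print("identical images:", global_ssim(reference, reference))
    print("noisy reconstruction:", global_ssim(reference, noisy))
```

A score of 1 means the two images are identical under this measure, and the score drops as the reconstruction departs from the reference.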

The organizers announced the results of the competition on December 11, 2020, at the Neural Information Processing Systems (NeurIPS) international conference, the top-level annual conference in machine learning. Our NeuroSpin/Cosmostat research trio placed second in the two supervised categories, out of 19 competitors. This success is all the more deserved as the competition was stiff, with entrants from both companies and academia. An article dedicated to the challenge, co-signed by its organizers (in particular Matthew Muckley from Facebook AI Research and Florian Knoll from NYU Langone) and by the three finalist teams, will be published in 2021 in the journal IEEE Transactions on Medical Imaging.

The main strengths of the algorithm developed at CEA

The algorithm developed by Zaccharie Ramzi contains multiple advances. First, it exploits one of the conclusions of the benchmark the researchers carried out previously (cf. news of June 2020 [1]): it is more advantageous to develop hybrid architectures of 2D CNNs, which alternate processing between image space and data acquisition space (also called k-space or Fourier space), than to develop an architecture that works in only one of the two spaces. Second, the algorithm integrates specific processing steps to make better use of multi-channel data, which keeps the signal-to-noise ratio high.
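The idea of alternating between the two spaces can be illustrated with a toy loop. This sketch is not the team's network: the learned CNN blocks are replaced by a plain 3x3 mean filter, the data are single-coil, and every function name is hypothetical. It only shows the alternation itself, with an image-space step followed by a k-space step that restores the measured samples.

```python
import numpy as np

def fft2c(image):
    """Centered 2D FFT: image space to k-space."""
    return np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(image)))

def ifft2c(kspace):
    """Centered 2D inverse FFT: k-space to image space."""
    return np.fft.fftshift(np.fft.ifft2(np.fft.ifftshift(kspace)))

def image_step(image):
    """Placeholder for a learned image-space block: 3x3 mean filter."""
    h, w = image.shape
    padded = np.pad(image, 1, mode="edge")
    out = np.zeros_like(image)
    for dy in range(3):
        for dx in range(3):
            out += padded[dy:dy + h, dx:dx + w]
    return out / 9

def kspace_step(kspace_estimate, measured, mask):
    """Data consistency: keep the measured samples wherever they exist."""
    return np.where(mask, measured, kspace_estimate)

def hybrid_reconstruction(measured, mask, n_iterations=5):
    """Alternate image-space and k-space processing, starting zero-filled."""
    image = ifft2c(measured)
    for _ in range(n_iterations):
        image = image_step(image)                      # image space
        kspace = kspace_step(fft2c(image), measured, mask)  # k-space
        image = ifft2c(kspace)
    return image

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    truth = rng.random((32, 32))
    mask = np.zeros((1, 32), dtype=bool)
    mask[0, ::4] = True       # every 4th column
    mask[0, 14:18] = True     # plus a low-frequency band
    measured = fft2c(truth) * mask
    recon = hybrid_reconstruction(measured, mask)
    print("reconstruction shape:", recon.shape)
```

In a trained hybrid network, each step would be a learned block and the number of alternations would be fixed by the architecture; the mean filter here has no learned behavior and is not expected to produce good images.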

Moreover, to achieve such performance, the researchers relied on the Jean Zay supercomputer at the “Institut du développement et des ressources en informatique scientifique” (IDRIS). This CNRS high-performance computing center set up a dynamic call for artificial intelligence projects in 2019. Thanks to the support of DRF/D3P (France Boileux-Cerneux and Christophe Calvin), the team was selected. The networks could be trained on GPU partitions whose nodes are equipped with Nvidia V100 cards, a reference in the field.

Zaccharie Ramzi's video at the NeurIPS conference:

https://slideslive.com/38942421/xpdnet-for-brain-multicoil-mri-reconstruction

References

[1] Cf. news of 06/04/2020 https://joliot.cea.fr/drf/joliot/Pages/Actualites/Scientistiques/2020/Deep-learning-IRM-Standard-comparaison- reseaux.aspx

[2] Cf. news of 02/17/2019 https://www.cea.fr/drf/Pages/Actualites/En-direct-des-labos/2019/IRM-haute-resolution--comment-ne-pas- spend-hours-.aspx
